Introduction

The Binary Tree Cameras problem focusses on the binary tree data structure, a form of graph. The high-level aim being to add a ‘camera’ to the least number of nodes such that every node is either a camera or has an edge that connects to a node with a camera.

Whilst this problem focusses on the tree structure, graphs are used extensively as the data structure behind many of the digital services we interact with on a day-to-day basis, such as:

- Facebook Graph – modelling your friendships, posts, likes, comments, etc. to help provide a better user experience (i.e. Simon and John are both connect to the Sarah node, I’ll recommend John as a friend to Simon)

- Google Maps – a road network is just a set of vertices with edges where you can transfer from one road to another. Google may use a graph to determine how to get from from vertex A to vertex B (with perhaps the edges containing information such as maximum speed, traffic volume, etc.). This is similar to routing protocols used on many corporate LANs / MANs

It’s obvious a good Software Engineer needs to understand trees and more generally graphs.

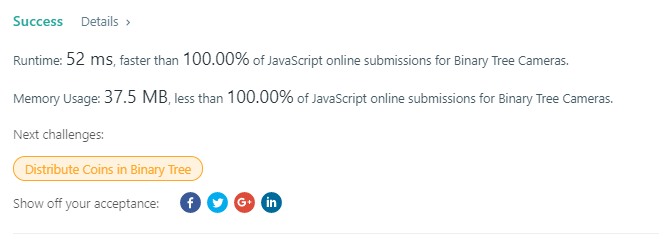

This post outlines my O(n) solution – every node of the graph is visited exactly once. This solution is faster and more memory efficient than all other accepted JavaScript solutions on LeetCode.

Approaches

Due to the use of a Binary Tree data structure, there are a number of constraints on the problem which make designing a solution a little easier (although I guess you don’t know what you don’t know, so I’m sure there’s a better way of solving this problem than mine!).

Ultimately you need to start at a node, traverse the tree in some way, and make decisions as to where cameras should be added – there are therefore three techniques:

- Start from root node (Root-Down) – start at the top and work your way down to the leaf nodes.

- Start at a random node – not sure how this would be sensible approach, but it’s an option!

- Start at the leaf nodes (Leaf-Up) – starting at the leaf nodes, work your way back up to the root node.

Whatever approach is taken to solve the problem, there are two algorithmic approaches for traversing the tree:

If you’re interested in learning more about tree structures, understanding traversal techniques such as breadth-first and depth-first are also important – particularly as tree search techniques.

I believe recursion is the simplest technique for navigating a graph – however, it does have one major disadvantage; whether programming in a high-level language such as Java or writing in low-level assembly, the stack is a limiting factor. A stack grows upwards and has a reserved area in the main memory of a computer – processes are assigned a portion of the stack area in memory to grow into, and the growth occurs when, amongst other things, function calls are made.

When a function is called, essentially (true enough for the purpose of this paragraph) the state of the current function is pushed onto the stack, as well as a return address and the parameters sent to the next function (known as the stack frame). When the new function returns, everything is popped off, and the code continues from where it left off with the correct state. However, if a function doesn’t return and more and more function calls are made (i.e. a very large tree), too many stack frames are pushed onto the stack and the stack limit is breached causing an exception. This limit is often lower than you expect. You can see how this can be an issue for recursive solutions.

Whilst I had this issue in the back of my mind, I decided to progress and learn more about the problem using a technique I was comfortable with; thankfully none of the test cases breached this limit! However, for this reason, I’m not the biggest fan of my solution – I know it will fail for large binary trees.

Root-Down

Often times the best way to solve a problem is to just get started – whilst this technique may often not result in the final solution, it will provide an opportunity to learn more about the problem, rather than trying to theoretically think through the entire solution.

I began to solve this problem using a root-down approach but quickly realised the logic was booming too complex – demonstrated through the example below:

The algorithm may initially think the first node doesn’t have a camera and isn’t covered by one itself, so it makes sense to put one down. We then go down the left and right sub trees, following the rule such that if a node is ‘covered’ by a camera, don’t put a camera down.

The most efficient number of cameras for the above solution is 3 however, not 4. How could this be solved? We could implement some sort of lookahead, but it’s no longer a truly recursive solution, and certainly not O(n).

What about starting at the leaf nodes? We need some knowledge of the structure of the tree to make efficient decisions.

Leaf-Up

If you recursively visit each each node until you reach a leaf node (of which there could of course be multiple), you can begin to give the parent nodes some context as to whether or not a camera should be added. Implementing this algorithm will result in the below solution for the problem introduced above:

By modifying the rule slightly – if as a node, you’re covered by a camera or your parent is covered by a camera, do not place a camera if you have children nodes. You can see how this rule related in an efficient placement around A->B and A->E.

By starting at the leaf nodes, you can provide context to the decision making process which results in the efficient placement of cameras.

Analysis

This problem wasn’t particularly hard, but it did provide a couple of learning opportunities which are discussed in this section. They are:

- Auto-generated Unit Testing

- Optimising code, and

- Parallel Computing

Auto-generated Unit Testing

The benefits of Test Driven Development (TDD) are well known, but as with the majority of testing approaches, it relies upon a human defining n number of scenarios that test the various use cases. It can be difficult if not close to impossible to tell whether you have the right level of coverage, because you don’t know what you don’t know. Solving LeetCode problems involves writing some code, running the tests, realising you didn’t cater for a certain scenario, updating your solution, and repeating. What if we weren’t given the outputs of the LeetCode tests? We were just told whether the solution is correct or not.

Thinking of this scenario and the fact that as humans we don’t know what we don’t know, would auto-generated unit tests be feasible? Could we let some code randomly create binary trees that we test against? The process would be:

- Software creates a binary tree

- Human inputs the expected value (so will only work for moderately sizes trees)

- Solution executes and is compared against expected value

In this way, we don’t need to rely on human intelligence to understand the various scenarios… but how likely and how quickly will auto-generated unit tests find errors in a solution? This obviously depends on the complexity of the input you’re generating, but in this scenario anything more than 100 unit tests would probably begin to annoy me.

The code to run these automated unit tests is available on GitHub; by ‘breaking’ various parts of my solution (to mimc the development process outlined above), auto-generation of unit tests found these issues after, on average, 2.5 rounds. That took me by surprise – this could be a really useful technique going forward.

So if you’re working for Facebook and you want to test your recommendation engine, you could auto-generate social graphs to compare an updated solution output to the existing solution. If you work for Google and you’re updating your directions functionality, will you remember to write a test that goes through a small village with a railway crossing that stops cars for 5 minutes when the barriers are down? Does your updated directions functionality take account of this scenario? Again, the success of this approach depends on the probability of auto-generation creating a good distribution of units tests, but it’s an interesting thought.

Optimising Code

What about performance? What makes a solution faster than 96% of solutions as opposed to being faster than all other solutions? As it turns out, not much. The usual suspects always play a part (including as discussed in a preview blog post, the shared LeetCode execution environment):

Reduce (or better yet, eliminate) logging. Logging in NodeJS leaves the NodeJS environment (event loop) – this can be a costly process. Often time pre-compiler directives (in C for example) can be used to eliminate certain logging statements from even making their way into the final executable, potentially reducing an application size so much that it can fit entirely into main memory, with the benefits that brings.

Stop Assigning variables that aren’t used (or no longer serve a purpose) – the Node object of the input to the solution contains a val property – I used this property to identify which nodes had cameras (0 and 1) when logging. Once I had a working solution, this was a wasted operation… so remove it.

Think about your code and how it will be compiled down (or interpreted) – if you think about the code block below, we’re assigning two variables, executing some conditional statements, and again assigning some variables a value.

var leftResp = null

var rightResp = null

if (node.left) {

leftResp = recurseNode(node.left, true)

}

if (node.right) {

rightResp = recurseNode(node.right, true)

}If this was in a compiled program, we’d have:

- Some stack operations to reserve address space

- Conditional logic including varying JMP commands as well as truth logic

- Further stack operations (calling functions, assigning values, etc.)

Compare that with the below code which achieves the same:

var leftResp = node.left && recurseNode(node.left, true)

var rightResp = node.right && recurseNode(node.right, true)If this were to be compiled down, we’d have:

- Some stack operations to reserve address space

- Some some truth logic

- Some more stack operations (calling functions, assigning values, etc.)

Now whilst the AND statement may result in some JMP commands to ensure the rhs does not execute if the lhs is false, this refactoring did result in a faster execution time. At a high level, less CPU instructions results in a faster execution time.

Parallel Computing

How else could we improve the performance of the solution that isn’t just optimising code? We could parallelise the solution. Whilst NodeJS is inherently single threaded following an event loop architecture, it does support support multi-threading through Workers. Could the solution be modified such that where a node branches off with 50% of nodes on one branch and 50% on the other (in the ideal world), we spin up an additional worker to execute in parallel (note not concurrently, we’d be making use of multi-processor architectures). However, much as calling functions and the resultant context switching has a performance impact (and generally makes recursive solutions slower than their iterative counterparts), so too does parallelising your code. Creating and destroying threads is a costly process.

Whilst parallelising the solution would have the aim of improving execution time, it has no impact on the computation complexity, it would remain O(n) as we must still visit each node once.

Conclusion

As with anything in life, knowledge and experience go hand in hand. To make the best decisions, you need both – ultimately a lack of experience will lead to errors and therefore more experience. As a Software Engineer, this experience leads to an intuition whereby you can tell when it’s worth abandoning a solution and looking elsewhere. Following a Root-Down approach, I got the feeling the solution wasn’t as clean as it could be (simply down to lines of code), so I decided to try something else. Trusting your intuition as a Software Engineer is extremely important.

Further to this, you may want to develop the perfect solution, but sometimes this is not possible and / or required. In this example I know my recursive solution wouldn’t work for extremely large trees, however it meets all the requirements outlined by the LeetCode Unit Tests. Understand (which often involves communicating with stakeholders) whether the scenarios not covered by your solution are likely to ever occur and are therefore worth the extra investment to cater for.